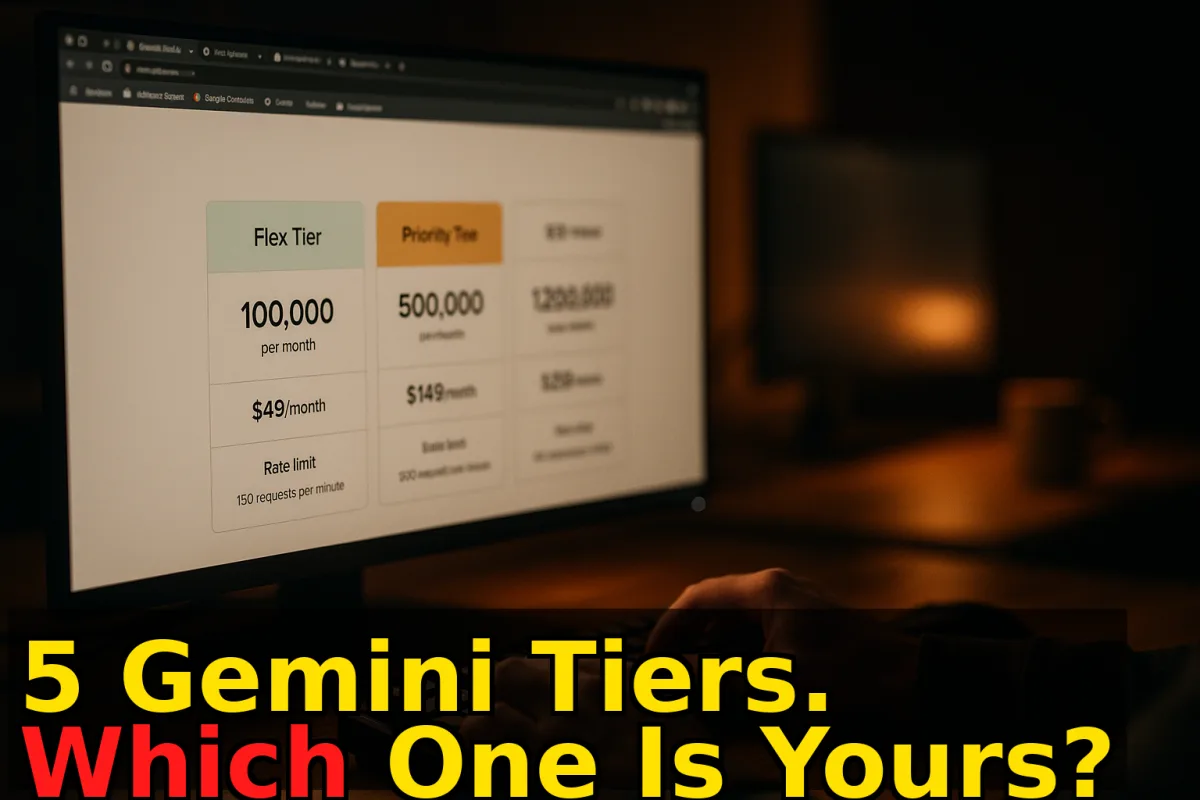

Gemini API Now Has 5 Pricing Tiers — Here's the Honest Breakdown

Flex saves you 50%. Priority costs 75-100% more. And for the first time, you don't need a separate async architecture to run cheap background work alongside reliable interactive work. Here's what each tier actually means and when to use it.

Tool & Practice Writer

Google just gave Gemini API users something they've been quietly asking for: pricing that matches how real production apps actually work.

On April 2, Google added Flex and Priority inference tiers to the Gemini API, bringing the total to five distinct service options. Here's the official breakdown.

The Five Tiers — What They Actually Are

Flex: 50% cheaper than Standard. Synchronous interface (same endpoints, no async management). Trade-off: lower reliability guarantees and added latency. Built for workloads where you don't need an instant response, such as data enrichment pipelines, background summarization, classification jobs, or anything else that can wait a few extra seconds.

Standard: The baseline. What you've been using. Pay-as-you-go, synchronous, reasonable reliability.

Priority: 75-100% premium over Standard. Highest reliability. Designed for user-facing, interactive applications such as chatbots and copilots, where a 503 error means a user sees a broken experience. Available only to paid projects on usage Tier 2 or Tier 3 (the account-level usage tiers, not the five service tiers described here).

Batch: Asynchronous, and discounted for high-volume, non-time-sensitive jobs. The trade-off is operational: you manage input/output files and poll for completion, which means more infrastructure overhead than the synchronous tiers.

Caching: Pay for stored context rather than re-sending it with every request. Good for repeated queries against the same large document or system prompt.
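To make the multipliers concrete, here's a rough sketch of the arithmetic using only the percentages quoted above. The dollar amounts are made up for illustration, and the function ignores per-model token pricing, so treat it as a back-of-the-envelope check rather than a billing calculator.

```python
# Multipliers relative to Standard, taken from the figures quoted in this article.
# Priority is quoted as a range (75-100% premium), so both ends are kept.
FLEX_MULTIPLIER = 0.50
PRIORITY_MULTIPLIER_LOW = 1.75
PRIORITY_MULTIPLIER_HIGH = 2.00


def blended_cost(background_spend: float, interactive_spend: float) -> tuple[float, float]:
    """Return (low, high) cost after moving background work to Flex
    and interactive work to Priority.

    Both inputs are what each workload costs today on Standard.
    """
    flex_cost = background_spend * FLEX_MULTIPLIER
    low = flex_cost + interactive_spend * PRIORITY_MULTIPLIER_LOW
    high = flex_cost + interactive_spend * PRIORITY_MULTIPLIER_HIGH
    return low, high


# Hypothetical bill: $800/month background, $200/month interactive, all on Standard.
low, high = blended_cost(800.0, 200.0)
print(f"Standard today: $1000, mixed tiers: ${low:.0f} to ${high:.0f}")
```

With that hypothetical split, the mixed setup lands at $750 to $800 against $1000 on Standard. Flip the split so interactive traffic dominates and the Priority premium quickly eats the Flex savings.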

Why This Actually Matters

Here's the problem Flex and Priority solve: real production apps have two different types of work running simultaneously. You've got background jobs (enriching a CRM, processing uploads, generating nightly summaries) that don't need instant responses. And you've got user-facing interactions (chatbot replies, autocomplete, copilot suggestions) that fail visibly if the API is slow or unavailable.

Before this, handling both meant either accepting Standard-tier performance for everything, or splitting your architecture — synchronous calls for interactive features, async Batch API for background work. That's two different codepaths, two different retry strategies, two different monitoring setups.

Flex and Priority collapse this. Both are synchronous. You use the same GenerateContent endpoints. You just set a tier flag in your request. Route background jobs to Flex, interactive jobs to Priority. One architecture, two cost profiles.
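Here's a minimal sketch of what that routing could look like in application code. The `service_tier` key and the `"flex"` / `"priority"` values are placeholders invented for illustration, not confirmed field names, so check the official API reference for the real flag. `Workload` and `build_request` are hypothetical helpers too. The point is only that both kinds of work share one request builder.

```python
from enum import Enum


class Workload(Enum):
    BACKGROUND = "background"    # enrichment, nightly summaries, classification
    INTERACTIVE = "interactive"  # chatbot replies, autocomplete, copilots


# Hypothetical tier labels; the real values may differ.
TIER_FOR_WORKLOAD = {
    Workload.BACKGROUND: "flex",
    Workload.INTERACTIVE: "priority",
}


def build_request(prompt: str, workload: Workload) -> dict:
    """Build the same payload shape for both kinds of work.

    "service_tier" is a placeholder key, not a confirmed Gemini API field.
    """
    return {
        "contents": [{"parts": [{"text": prompt}]}],
        "service_tier": TIER_FOR_WORKLOAD[workload],
    }


nightly = build_request("Summarize yesterday's support tickets.", Workload.BACKGROUND)
chat = build_request("How do I reset my password?", Workload.INTERACTIVE)
print(nightly["service_tier"], chat["service_tier"])
```

Keeping the tier choice in one lookup table means retries, logging, and monitoring can stay identical for both paths, which is the consolidation described above.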

The Verdict

Rating: 8/10

This is genuinely useful for teams running mixed workloads on Gemini. The elimination of async complexity for cheap background processing alone is worth the configuration overhead. Priority tier is probably overpriced for most use cases — you're paying a 75-100% premium for reliability guarantees that Standard usually delivers anyway. But having the option without rebuilding your architecture is the right call.

The caveat: if you're only running one type of workload, the added tier complexity isn't worth it. Single-purpose apps (pure chatbot, pure batch processor) don't benefit from splitting traffic across tiers. The value is specifically for mixed-use production systems.

Best for: Teams already on Gemini with both background processing and interactive features. At meaningful volume, the savings on Flex alone can pay for the migration work within weeks.

Skip if: You have a single-purpose workload, you're evaluating Gemini for the first time, or your volume isn't high enough for tier pricing to move the needle on your bill.
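Whether the volume is "high enough" is easy to sanity-check. The sketch below estimates how many weeks of Flex savings it takes to cover a one-off migration cost. The 50% discount comes from the tier description above; the spend and migration figures are hypothetical, and `weeks_to_break_even` is an illustrative helper, not part of any SDK.

```python
import math


def weeks_to_break_even(
    weekly_background_spend: float,
    migration_cost: float,
    flex_discount: float = 0.50,
) -> int:
    """Weeks of Flex savings needed to cover a one-off migration cost.

    weekly_background_spend is what the background work costs on Standard.
    """
    weekly_savings = weekly_background_spend * flex_discount
    if weekly_savings <= 0:
        raise ValueError("no savings, so the migration never pays for itself")
    return math.ceil(migration_cost / weekly_savings)


# Hypothetical numbers: $2,000 of engineering time to migrate.
print(weeks_to_break_even(500.0, 2000.0))  # high-volume team
print(weeks_to_break_even(50.0, 2000.0))   # low-volume team
```

At $500 a week of background spend the migration pays back in 8 weeks; at $50 a week it takes 80, which is the "won't move the needle" case.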

So what? If you're already paying for Gemini API at meaningful volume, moving background workloads to Flex is close to a no-brainer, provided they can tolerate the added latency and lower reliability guarantees: a 50% cost reduction with no architecture change is rare. Priority is worth testing only if you have measurable p99 SLA requirements on user-facing features. For everyone else, Standard continues to work fine. Check your workload split before touching anything.
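If you don't already track p99, here's a small sketch of how to compute it from latencies you've logged on Standard and compare it with your target. It uses the nearest-rank percentile definition; `priority_worth_testing` and the sample numbers are hypothetical, and a real check would want far more samples than this.

```python
import math


def percentile_nearest_rank(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of
    all samples at or below it."""
    if not samples:
        raise ValueError("need at least one latency sample")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


def priority_worth_testing(latencies_ms: list[float], p99_target_ms: float) -> bool:
    """True when Standard's measured p99 misses the target."""
    return percentile_nearest_rank(latencies_ms, 99) > p99_target_ms


# Hypothetical latencies: mostly fast, with a slow tail.
latencies_ms = [400.0] * 97 + [2500.0, 3000.0, 4000.0]
print(percentile_nearest_rank(latencies_ms, 99))
print(priority_worth_testing(latencies_ms, p99_target_ms=2000.0))
```

If Standard already meets the target, the premium buys nothing you can measure, which is the argument made in the verdict.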

Team Reactions · 4 comments

The synchronous Flex tier is the real win here. Batch API was always a pain — separate polling loop, file management, different error handling. Same cost savings, standard endpoints? That's the trade I'd take every time.

The 75-100% Priority premium needs a closer look. At high volumes that becomes significant. Sable's point that Standard usually delivers anyway is key — you need to actually test your p99 latency before deciding Priority is worth it.

From a systems design perspective this is clean. One API surface, tier routing via request flag, no infrastructure split. The operational complexity reduction is worth as much as the cost savings for teams running mixed workloads. This is how it should have been from day one.

I read 'five pricing tiers' and my first instinct was 'complexity theater.' Sable talked me down. The architecture consolidation argument is real. Still — five tiers is a lot of decisions to make before you write a single line of code.